The relation attention network is proposed in the paper Learning to Compare: Relation Network for Few-Shot Learning. In this article, we will introduce it.

Relation attention network

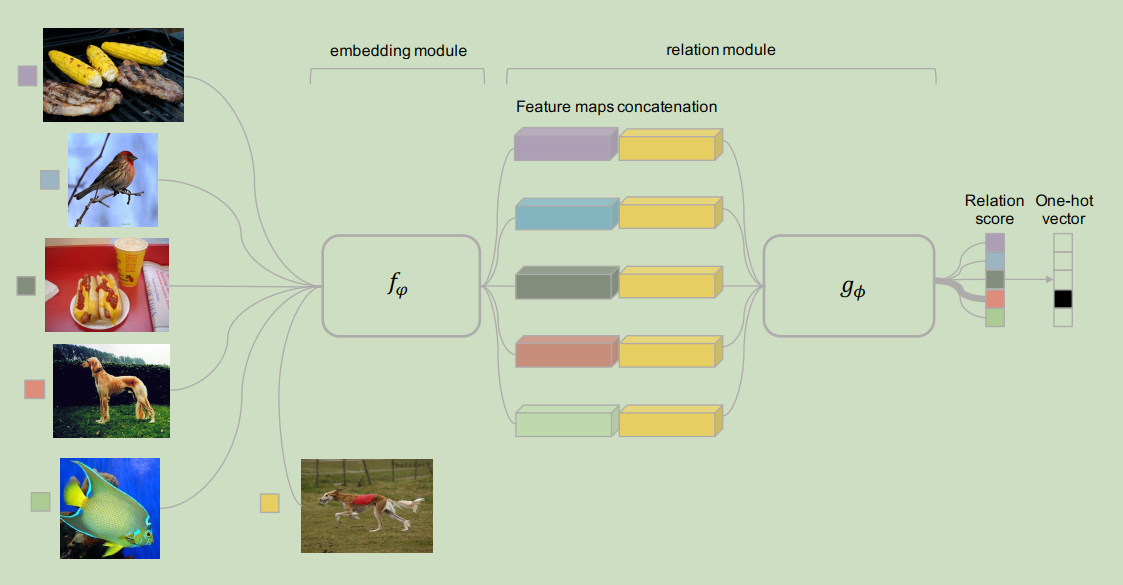

It looks like:

We should notice:

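To calculate the relation score, the feature map of a query sample is concatenated with the feature map of each sample (or class) in the support set, and the concatenated map is fed into a relation module that outputs a score between 0 and 1.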
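The concatenate-then-score step can be sketched in Python as below. This is a minimal sketch under our own assumptions: plain-list feature vectors stand in for the paper's convolutional feature maps, a two-layer MLP with random, untrained weights stands in for the paper's convolutional relation module, and `RelationModule`, `feat_dim` and `hidden_dim` are hypothetical names.

```python
import math
import random


def relu(v):
    return [max(0.0, x) for x in v]


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def linear(weights, bias, v):
    # weights is a list of rows; each row has len(v) entries
    return [sum(w * x for w, x in zip(row, v)) + b for row, b in zip(weights, bias)]


class RelationModule:
    """Hypothetical toy relation module: concatenate two feature vectors,
    apply a two-layer MLP, and squash the result to (0, 1) with a sigmoid."""

    def __init__(self, feat_dim, hidden_dim, seed=0):
        rng = random.Random(seed)
        in_dim = 2 * feat_dim  # concatenation doubles the input dimension
        self.w1 = [[rng.uniform(-0.5, 0.5) for _ in range(in_dim)] for _ in range(hidden_dim)]
        self.b1 = [0.0] * hidden_dim
        self.w2 = [[rng.uniform(-0.5, 0.5) for _ in range(hidden_dim)]]
        self.b2 = [0.0]

    def score(self, support_feat, query_feat):
        pair = support_feat + query_feat  # list concatenation = feature concatenation
        hidden = relu(linear(self.w1, self.b1, pair))
        return sigmoid(linear(self.w2, self.b2, hidden)[0])


# Usage: score one query against each support class (hypothetical features)
support = {"cat": [0.9, 0.1, 0.3], "dog": [0.2, 0.8, 0.5]}
query = [0.85, 0.15, 0.35]
g = RelationModule(feat_dim=3, hidden_dim=4)
scores = {label: g.score(feat, query) for label, feat in support.items()}
print(scores)
```

Because the weights are untrained, the printed scores are arbitrary; the sketch only shows the data flow: concatenate, transform, squash to (0, 1).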

However, as discussed in our article:

How to Incorporate External Knowledge in Recurrent Neural Networks (RNNs) for Text Classification

Concatenation may not be the best way to fuse features.

Gating may be a better method.
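For comparison, below is one common form of gated fusion, sketched in Python. We assume a sigmoid gate computed from both inputs that mixes them element by element; this is not necessarily the exact gating used in the article above, and `gated_fusion` and its random weights are hypothetical.

```python
import math
import random


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def gated_fusion(a, b, w, bias):
    """Fuse two same-size feature vectors with an element-wise gate:
    gate = sigmoid(W [a; b] + bias), fused = gate * a + (1 - gate) * b."""
    assert len(a) == len(b)
    pair = a + b  # the gate looks at both inputs
    gate = [sigmoid(sum(wi * x for wi, x in zip(row, pair)) + bi) for row, bi in zip(w, bias)]
    return [g * x + (1.0 - g) * y for g, x, y in zip(gate, a, b)]


dim = 3
rng = random.Random(0)
w = [[rng.uniform(-0.5, 0.5) for _ in range(2 * dim)] for _ in range(dim)]  # hypothetical weights
bias = [0.0] * dim
fused = gated_fusion([1.0, 0.0, 2.0], [0.0, 1.0, 2.0], w, bias)
print(fused)
```

Unlike concatenation, the fused vector keeps the original dimension, and each element lies between the corresponding elements of the two inputs.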

Meanwhile, this relation network is similar to a memory network.
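To make the similarity concrete, here is a single-hop memory read of an end-to-end memory network, sketched in Python: the query u is scored against each input memory m_i with an inner product, the scores are normalized with a softmax, and the output is the weighted sum of the output memories c_i. A relation network plays a similar role when it scores a query against each support sample, but it uses a learned relation module instead of an inner product. The function names and toy vectors are hypothetical.

```python
import math


def softmax(xs):
    m = max(xs)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def memory_read(query, input_memory, output_memory):
    """One hop: p_i = softmax(u . m_i), o = sum_i p_i * c_i."""
    scores = [sum(q * m for q, m in zip(query, mi)) for mi in input_memory]
    p = softmax(scores)
    dim = len(output_memory[0])
    o = [sum(pi * ci[d] for pi, ci in zip(p, output_memory)) for d in range(dim)]
    return p, o


u = [1.0, 0.0]
m = [[1.0, 0.0], [0.0, 1.0]]
c = [[10.0, 0.0], [0.0, 10.0]]
p, o = memory_read(u, m, c)
print(p, o)
```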

Understand End-To-End Memory Networks

How can we use relation attention if we do not have a target embedding? There is another way.

We can find a solution in the paper:

Frame Attention Networks for Facial Expression Recognition in Videos
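Below is a minimal Python sketch of how we understand the frame attention idea: a self-attention step first builds a global video feature from the frames themselves, and this global feature then serves as the target for relation attention, so no external target embedding is needed. The weight vectors `q0` and `q1` would be learned parameters in a real model; here they are fixed hypothetical values, and `frame_attention` is our own name, not an API from the paper.

```python
import math


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def weighted_mean(vectors, weights):
    total = sum(weights)
    dim = len(vectors[0])
    return [sum(w * v[d] for w, v in zip(weights, vectors)) / total for d in range(dim)]


def frame_attention(frames, q0, q1):
    """Aggregate per-frame features without an external target embedding.

    1. Self-attention: alpha_i = sigmoid(f_i . q0); global feature f' is the
       alpha-weighted mean of the frames.
    2. Relation attention: f' acts as the target; beta_i = sigmoid([f_i ; f'] . q1).
    3. Output: weighted mean of [f_i ; f'] with weights alpha_i * beta_i.
    """
    alpha = [sigmoid(dot(f, q0)) for f in frames]
    global_feat = weighted_mean(frames, alpha)
    pairs = [f + global_feat for f in frames]  # concatenation [f_i ; f']
    beta = [sigmoid(dot(p, q1)) for p in pairs]
    return weighted_mean(pairs, [a * b for a, b in zip(alpha, beta)])


frames = [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5]]  # hypothetical per-frame features
q0 = [0.3, -0.2]
q1 = [0.1, 0.4, -0.3, 0.2]
video_feat = frame_attention(frames, q0, q1)
print(video_feat)
```

The output has twice the frame feature dimension because each frame feature is concatenated with the global feature before the final weighted average.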