When we build a neural network using PyTorch or TensorFlow, the weights are initialized randomly. In order to reproduce a model result, we need to set a global random seed for Python, NumPy, etc.
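As a minimal sketch of the seeding step described above, we can collect the calls in one helper. The function name `set_global_seed` is our own (hypothetical) choice, and the PyTorch part is skipped when PyTorch is not installed. Seeding alone may not remove every source of nondeterminism, for example some GPU operations.

```python
import random

import numpy as np


def set_global_seed(seed: int) -> None:
    """Seed the common random number generators (hypothetical helper)."""
    # Python's built-in random module
    random.seed(seed)
    # NumPy's legacy global random state
    np.random.seed(seed)
    # PyTorch is optional here: seed it only if it can be imported
    try:
        import torch
    except ImportError:
        return
    torch.manual_seed(seed)


# The same seed should give the same random numbers
set_global_seed(42)
first = (random.random(), np.random.rand(3).tolist())
set_global_seed(42)
second = (random.random(), np.random.rand(3).tolist())
print(first == second)
```

For TensorFlow 2, `tf.random.set_seed(seed)` plays a similar role and could be added to the helper in the same way.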

The Simplest Way to Reproduce Model Result in PyTorch – PyTorch Tutorial

A Beginner Guide to Get Stable Result in TensorFlow – TensorFlow Tutorial

However, can the random seed affect the performance of our neural network? The paper Lexicon Integrated CNN Models with Attention for Sentiment Analysis discusses this topic.

In this paper, we can find:

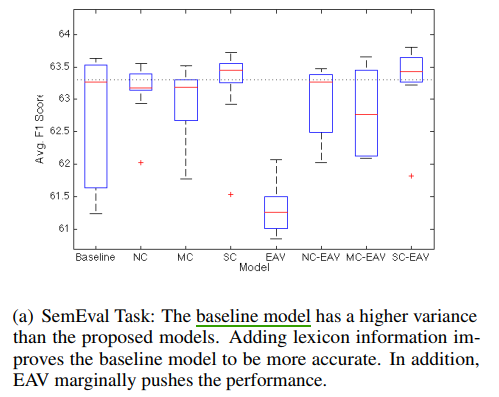

Different random seeds when training the CNN models could possibly change the behavior of models, sometimes by more than 1%. This is due to the randomness in deep learning, such as the random shuffling the datasets, initialization of the weights and drop-out rate of a tensor.

This means that if two models have different accuracies and the gap is less than 1%, the difference may be caused by the random seed rather than by the models themselves.
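One rough way to check this is to train each model with several seeds and compare the gap between the mean accuracies with the spread across seeds. The sketch below assumes we already have a list of accuracies per model. The function name `gap_within_seed_noise` and the accuracy values are made up for illustration only, and the comparison is a simple heuristic, not a statistical significance test.

```python
from statistics import mean, stdev


def gap_within_seed_noise(acc_a, acc_b):
    """Compare the mean accuracy gap with the seed-to-seed spread.

    Hypothetical helper: returns (gap, noise, within), where `within`
    is True when the gap is not larger than the bigger standard deviation.
    """
    gap = abs(mean(acc_a) - mean(acc_b))
    noise = max(stdev(acc_a), stdev(acc_b))
    return gap, noise, gap <= noise


# Made-up accuracies for two models, each trained with 5 different seeds
model_a = [0.871, 0.879, 0.866, 0.882, 0.874]
model_b = [0.876, 0.869, 0.884, 0.872, 0.880]

gap, noise, within = gap_within_seed_noise(model_a, model_b)
print(f"gap={gap:.4f}, seed noise={noise:.4f}, within noise={within}")
```

If the gap is within the seed noise, we should be careful before saying one model is better than the other.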

Here is an example for 10 random seeds: