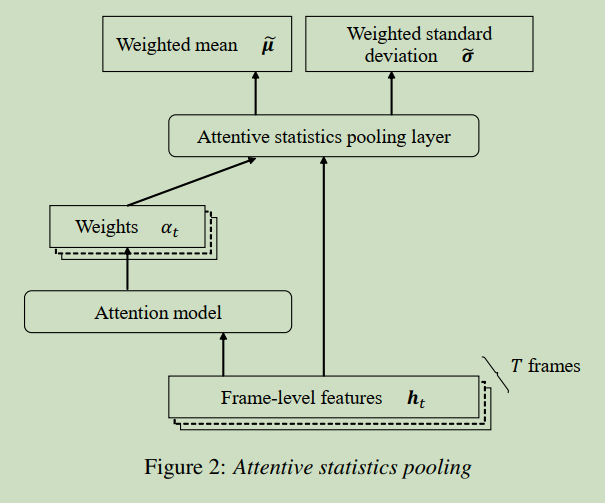

Attentive Statistics Pooling was proposed in the paper Attentive Statistics Pooling for Deep Speaker Embedding. In this article, we will introduce it for beginners.
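To give a rough idea of the operation, here is a minimal NumPy sketch, not the paper's reference implementation. It scores each frame, turns the scores into attention weights with a softmax, and then concatenates the attention-weighted mean and standard deviation of the frame-level features. The function name `attentive_stats_pooling`, the parameter names `W`, `b`, `v`, `k`, the use of `tanh` as the non-linearity, and the random inputs are all assumptions made for illustration.

```python
import numpy as np

def attentive_stats_pooling(h, W, b, v, k=0.0, eps=1e-8):
    """Pool frame-level features h of shape (T, D) into one vector of shape (2*D,).

    W: (D, A), b: (A,), v: (A,), k: scalar are hypothetical attention parameters.
    """
    # Scalar score per frame: e_t = v^T tanh(W^T h_t + b) + k
    scores = np.tanh(h @ W + b) @ v + k            # (T,)
    # Softmax over frames (shifted by the max for numerical stability)
    scores = scores - scores.max()
    alpha = np.exp(scores) / np.exp(scores).sum()  # (T,), sums to 1
    # Attention-weighted mean and standard deviation
    mean = alpha @ h                               # (D,)
    var = alpha @ (h * h) - mean * mean            # (D,)
    std = np.sqrt(np.clip(var, eps, None))         # clip guards against tiny negatives
    return np.concatenate([mean, std])

# Toy usage with random data (shapes chosen arbitrarily)
rng = np.random.default_rng(0)
T, D, A = 50, 8, 4
h = rng.normal(size=(T, D))
W = rng.normal(size=(D, A))
b = np.zeros(A)
v = rng.normal(size=A)
pooled = attentive_stats_pooling(h, W, b, v)
print(pooled.shape)
```

If every frame gets the same score (for example `v` set to zeros), the weights become uniform and the result reduces to plain statistics pooling, that is, the ordinary mean and standard deviation over frames.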

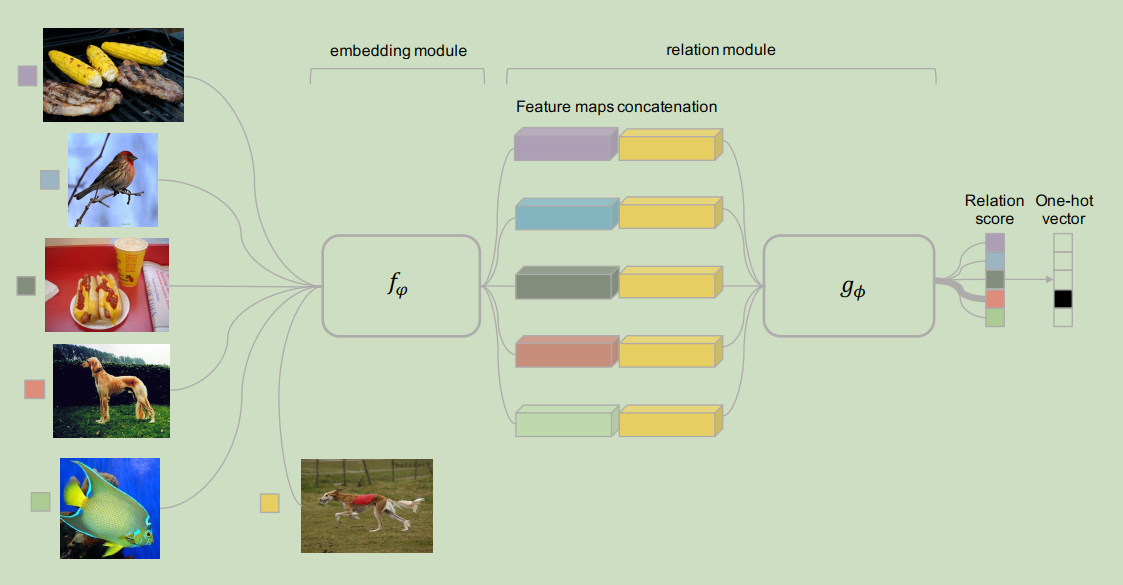

Relation attention network was proposed in the paper Learning to Compare: Relation Network for Few-Shot Learning. In this article, we will introduce it.

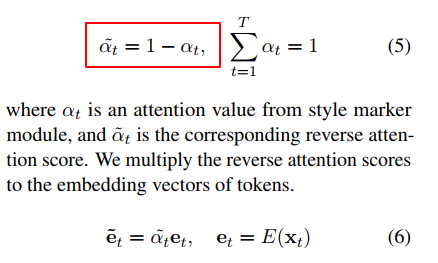

In this article, we will introduce what reverse attention is and how to use it in the text style transfer task.

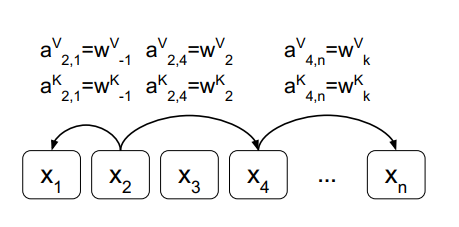

In this article, we will introduce how to compute self-attention with relative position representations in deep learning.
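As a hedged illustration, the sketch below assumes the commonly used formulation in which a learned embedding for the clipped relative distance `j - i` is added to the keys when computing attention scores and to the values when computing the output. It is a single-head NumPy example; the function name `relative_self_attention`, the parameter names (`Wq`, `Wk`, `Wv`, `rel_k`, `rel_v`, `max_dist`) and the random inputs are assumptions for illustration only.

```python
import numpy as np

def relative_self_attention(x, Wq, Wk, Wv, rel_k, rel_v, max_dist):
    """Single-head self-attention with relative position embeddings.

    x: (n, d_in); Wq, Wk, Wv: (d_in, d)
    rel_k, rel_v: (2 * max_dist + 1, d), one embedding per clipped distance.
    """
    n = x.shape[0]
    q, k, v = x @ Wq, x @ Wk, x @ Wv                     # each (n, d)
    d = q.shape[-1]
    # Relative distance j - i, clipped to [-max_dist, max_dist], shifted to >= 0
    pos = np.arange(n)
    idx = np.clip(pos[None, :] - pos[:, None], -max_dist, max_dist) + max_dist
    a_k = rel_k[idx]                                     # (n, n, d)
    a_v = rel_v[idx]                                     # (n, n, d)
    # e_ij = q_i . (k_j + a_k[i, j]) / sqrt(d)
    scores = (q @ k.T + np.einsum("id,ijd->ij", q, a_k)) / np.sqrt(d)
    scores = scores - scores.max(axis=-1, keepdims=True)
    alpha = np.exp(scores)
    alpha = alpha / alpha.sum(axis=-1, keepdims=True)    # rows sum to 1
    # z_i = sum_j alpha_ij * (v_j + a_v[i, j])
    out = alpha @ v + np.einsum("ij,ijd->id", alpha, a_v)
    return out, alpha

# Toy usage with random data (shapes chosen arbitrarily)
rng = np.random.default_rng(0)
n, d_in, d, max_dist = 5, 6, 4, 2
x = rng.normal(size=(n, d_in))
Wq, Wk, Wv = (rng.normal(size=(d_in, d)) for _ in range(3))
rel_k = rng.normal(size=(2 * max_dist + 1, d))
rel_v = rng.normal(size=(2 * max_dist + 1, d))
out, alpha = relative_self_attention(x, Wq, Wk, Wv, rel_k, rel_v, max_dist)
print(out.shape, alpha.shape)
```

Clipping the distance means positions farther apart than `max_dist` share one embedding, so the number of relative embeddings does not grow with sequence length.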

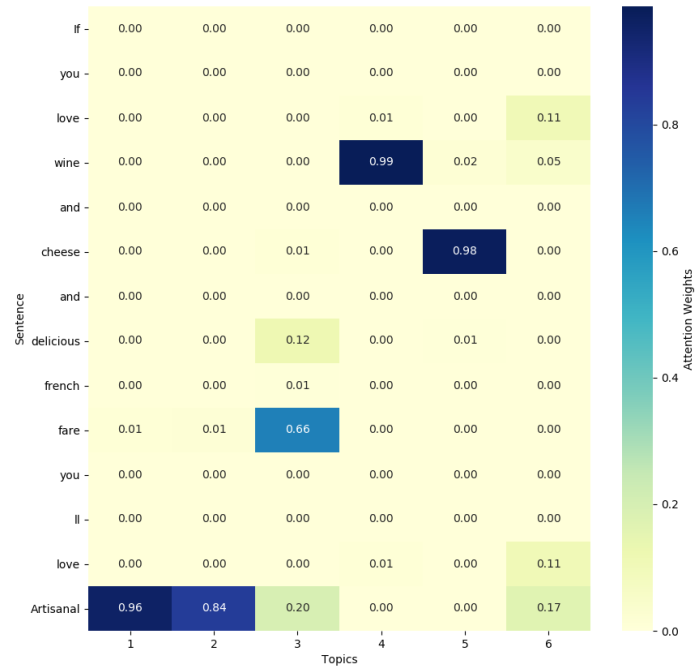

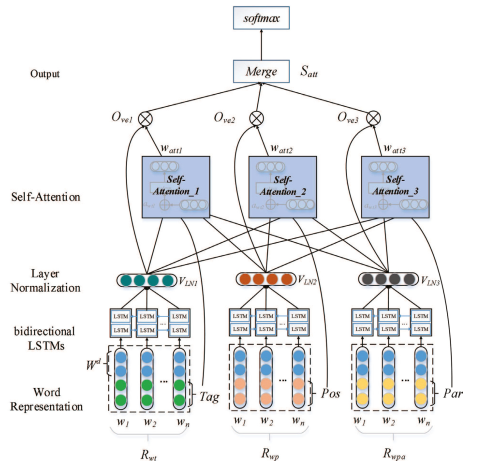

Topic attention was proposed in the paper Aspect Category Detection via Topic-Attention Network. It can incorporate topic information into the self-attention mechanism.

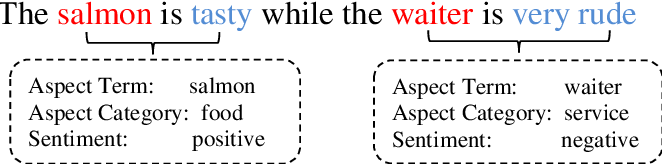

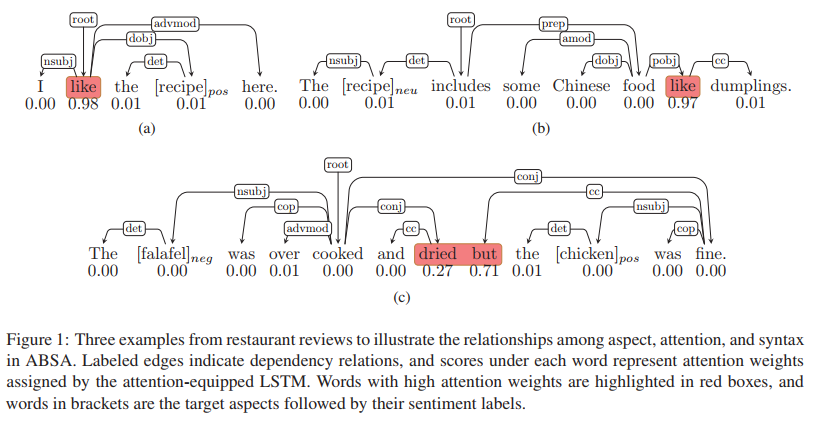

The heart of the Aspect-based Sentiment Analysis (ABSA) task is how to connect aspects with their respective opinion words effectively. To capture this connection, we can compute a coefficient between each aspect and its opinion words.

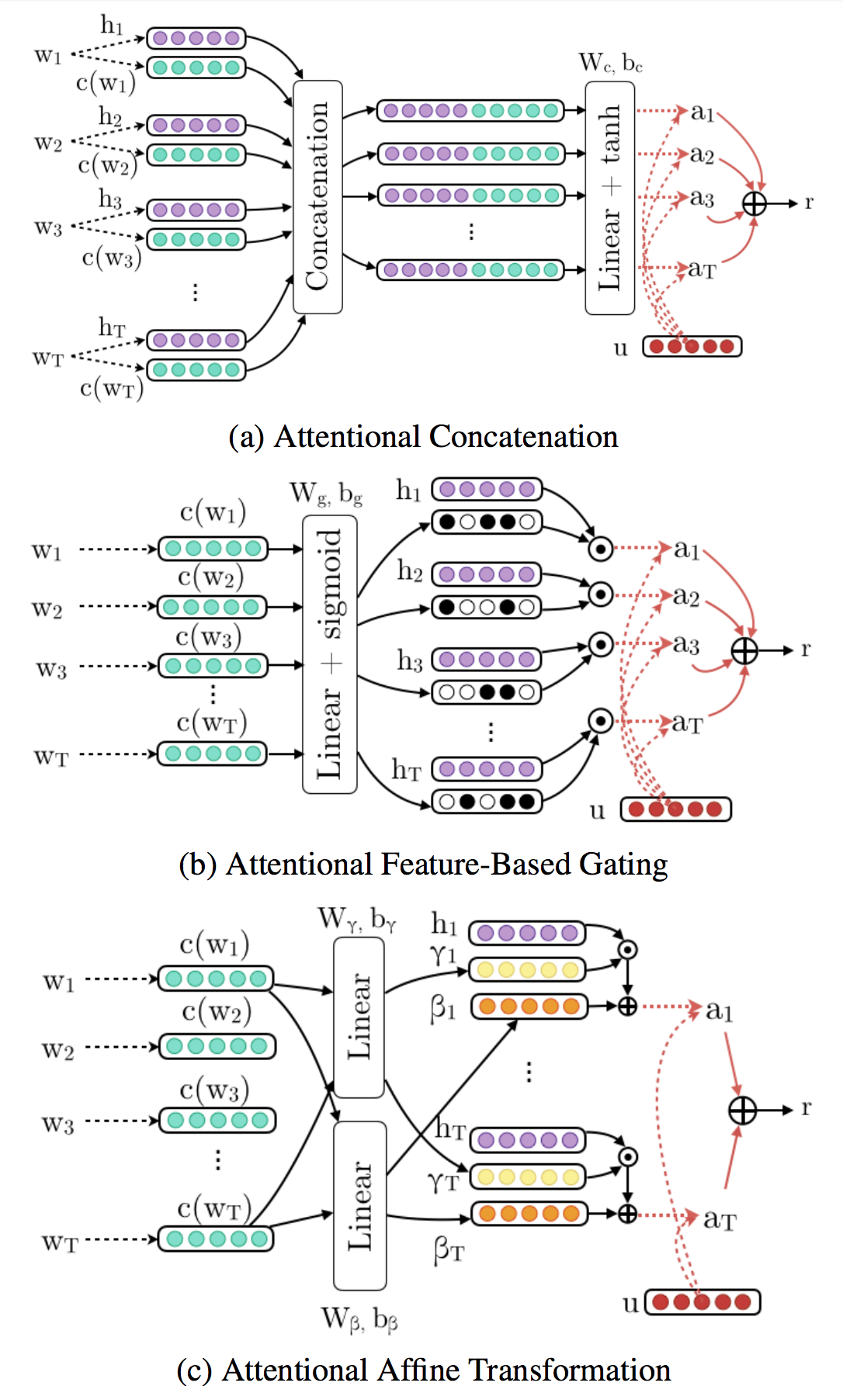

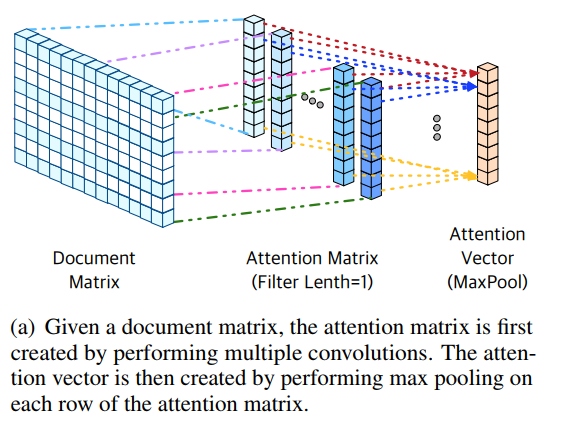

In this article, we will introduce three methods to incorporate external knowledge in recurrent neural networks (RNNs) for text classification.

The attention mechanism is widely used in deep learning. It has been shown to improve the performance of deep learning models.

Many linguistic knowledge and sentiment resources are available nowadays, for example, sentiment lexicons. We can use these resources to improve sentiment classification.

Embedding attention was proposed in the paper Lexicon Integrated CNN Models with Attention for Sentiment Analysis. In this note, we will introduce it.