In this article, we will introduce how to use the negative supervision method in multitask text classification.

Problem in multitask text classification

In multitask text classification, similar texts may be assigned different labels by different classifiers. When the representations of such texts are nearly identical, they are hard to tell apart, which can lower performance in some cases.

Why use negative supervision in multitask text classification?

To address the problem above, we can use the negative supervision method, which encourages similar texts with different labels to have distinct representations.

This method is described in the paper: Text Classification with Negative Supervision
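To make the idea concrete, here is a minimal sketch in plain Python. It is not the paper's exact formulation: it assumes cosine similarity as the measure of closeness, averages it over every pair of texts in a batch whose labels differ, and adds the result to the classification loss with a weight `alpha`. The function names, the toy vectors, and `alpha` are all hypothetical and chosen only for illustration.

```python
import math


def cosine(u, v):
    """Cosine similarity between two equal-length, non-zero vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


def negative_supervision_penalty(embeddings, labels):
    """Mean cosine similarity over all pairs of texts whose labels differ.

    Minimizing this value pushes differently labeled texts apart.
    Returns 0.0 when the batch contains no such pair.
    """
    sims = [
        cosine(embeddings[i], embeddings[j])
        for i in range(len(embeddings))
        for j in range(i + 1, len(embeddings))
        if labels[i] != labels[j]
    ]
    return sum(sims) / len(sims) if sims else 0.0


def total_loss(classification_loss, embeddings, labels, alpha=1.0):
    """Classification loss plus the weighted penalty (alpha is an assumed hyperparameter)."""
    return classification_loss + alpha * negative_supervision_penalty(embeddings, labels)


# Toy batch: the first two texts have identical representations but
# different labels; the third is orthogonal to both.
embeddings = [[1.0, 0.0], [1.0, 0.0], [0.0, 1.0]]
labels = ["sports", "politics", "sports"]
penalty = negative_supervision_penalty(embeddings, labels)
```

In a real model the same quantity would be computed on the encoder's output tensors for each batch, so that gradients from the penalty flow back into the encoder along with the classification loss.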

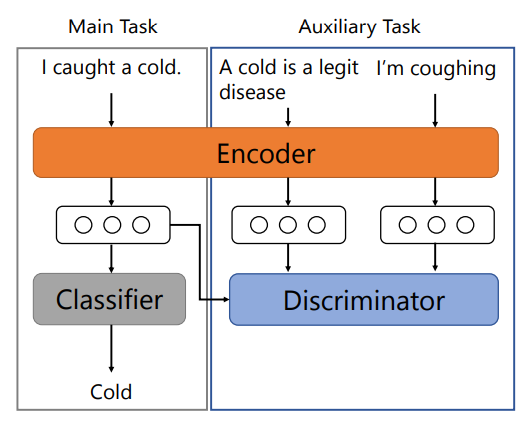

The architecture of this method is below:

According to the paper, this method outperforms the state-of-the-art pre-trained model on both single- and multi-label classification, on sentence and document classification, and on classification in three different languages.

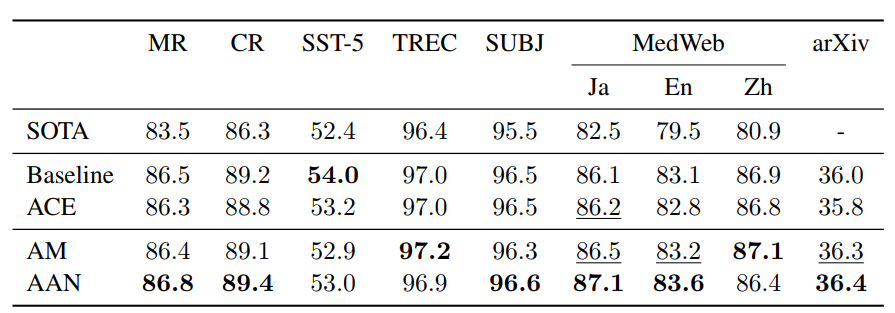

The experimental result is:

How to implement negative supervision in multitask text classification?

We can find a PyTorch implementation here.

You can run the code like this:

python main.py